INSPIRED

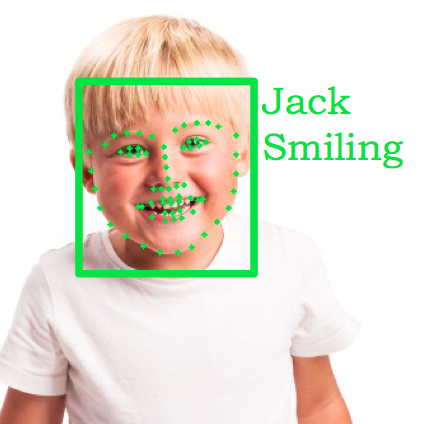

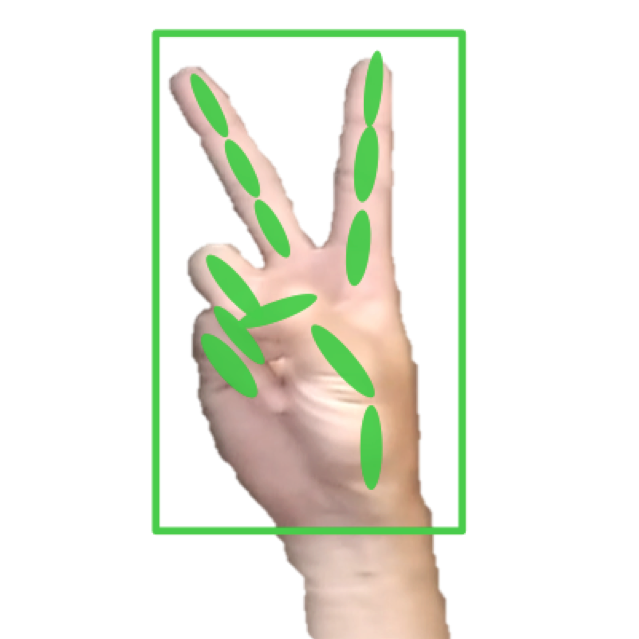

Machine Vision System

A deep-neural-network-based embedded machine vision solution that helps drones, toys, service robots, and other consumer electronics products interact with humans more efficiently and autonomously.